Secure uploading of files from an iOS or Android app to S3

Why?

Most mobile applications these days require some form of backend. Often it handles something simple, like maintaining user profiles, settings, and scores. More often than not, however, the application also needs to upload files to your server. This tutorial shows how to do that securely and efficiently using AWS S3. Why spin up powerful servers that can handle huge amounts of traffic when all they do is receive files and store them on S3 anyway? Files can be uploaded to S3 directly, and the S3 infrastructure scales with our application and its traffic. All we need to do is implement a simple, lightweight API endpoint that tells the client where to upload the data, and let S3 do the heavy lifting.

This solution has the following characteristics:

- The upload is a two-step process. First the client requests permission to upload, providing the server with the intent and the metadata of the file to be uploaded. This is especially helpful in applications where the server should know about an upload in progress, since it may take the client some time and possibly a few attempts to upload the data (due to slow or unreliable cellular networks).

- The upload links are issued on request and only to authenticated users (authentication is outside the scope of this tutorial). Each link is time limited and valid for a single object key.

- Absolutely no AWS access/secret keys are embedded within the application. Even if attackers reverse engineer our application, they will not find any keys with which to poke around our servers or backend storage.

- At the same time, the mobile application is left completely unaware of the complexities of uploading to S3. It doesn’t need to sign requests or provide special headers, and no client AWS libraries are required. All the application does is upload raw data to a URL with the HTTP method the server specifies (PUT in this example). We can use any HTTP library of our choice for that.

- This mechanism gives us the flexibility to expand and change our backend architecture in the future without changing the mobile application or deploying a new version of it. If we decide we do want the uploaded files to hit our servers first, or want to route them to a different cloud provider, we simply change the URLs returned from the API in our backend and the files will flow through a different route.

This two-step approach obviously has some drawbacks, e.g. double the API calls. In some cases, however, it brings unexpected benefits. For example, at Bugsee we upload and process thousands of crash reports generated on millions of devices. Being able to replay the last minute of everything that happened in the app, including video, network traffic, console logs, etc., turns out to be really useful for our clients, and helps them resolve bugs and crashes whose root cause they wouldn’t otherwise be able to find. In many cases, however, a single recording is enough. Having thousands of different sessions of the same crash doesn’t bring any additional value; no developer is going to watch them all. The two-step approach allows our client SDK to first register an intent containing metadata and a unique signature of the crash. The Bugsee backend then has the luxury of registering the crash and incrementing the statistics counters while instructing the client to drop the recording and never upload it. This saves huge amounts of traffic both for the users and for us.
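The decision made at the intent step can be sketched roughly as follows. This is only an illustration under stated assumptions, not Bugsee's actual implementation: `createUploadDecider`, `decideUpload`, the in-memory `Map`, and the `maxRecordingsPerSignature` limit are all hypothetical names chosen for this example.

```javascript
// Hypothetical sketch: decide whether a client should upload its recording.
// Counts live in an in-memory Map purely for illustration; a real backend
// would keep them in a database.
function createUploadDecider(maxRecordingsPerSignature) {
  const seen = new Map(); // crash signature -> number of reports registered

  return function decideUpload(signature) {
    const count = (seen.get(signature) || 0) + 1;
    seen.set(signature, count); // the crash is always counted in the statistics

    // Request the recording only while we have not yet reached the limit.
    return { count: count, upload: count <= maxRecordingsPerSignature };
  };
}

const decideUpload = createUploadDecider(1);
console.log(decideUpload('crash-abc')); // first report of this crash
console.log(decideUpload('crash-abc')); // repeat report of the same crash
```

When `upload` is false, the server would simply answer the intent without returning an upload URL, and the client would discard the recording.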

With that in mind, let’s get down to business:

Preparing AWS resources

First we will create a new S3 bucket for this and name it “bugsee-example”. There is no need to make it public or change its settings; the defaults are good enough for what we are about to do.

Create a new AWS user and give it the right to upload files only. To achieve that, attach the following policy:

{

"Version": "2012-10-17",

"Statement": [

{

"Effect": "Allow",

"Action": [

"s3:PutObject"

],

"Resource": [

"arn:aws:s3:::bugsee-example/upload/*"

]

}

]

}

The user can only upload files (the s3:PutObject action) to the “bugsee-example” bucket, and only under the upload/ prefix. It can’t read any files, and it can’t even list the contents of the bucket. That is exactly our intention.
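If the same restriction is needed for several buckets or prefixes, the policy document can be generated instead of written by hand. The helper below is a hypothetical sketch (the name `buildUploadOnlyPolicy` is not part of any AWS SDK); it only reproduces the JSON structure of the policy above.

```javascript
// Hypothetical helper: build an upload-only IAM policy document for
// one bucket and one key prefix.
function buildUploadOnlyPolicy(bucket, prefix) {
  return {
    Version: '2012-10-17',
    Statement: [
      {
        Effect: 'Allow',
        Action: ['s3:PutObject'], // upload only: no read, no list
        Resource: ['arn:aws:s3:::' + bucket + '/' + prefix + '/*']
      }
    ]
  };
}

// Print the policy for the bucket and prefix used in this tutorial.
console.log(JSON.stringify(buildUploadOnlyPolicy('bugsee-example', 'upload'), null, 2));
```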

Building the backend

For the sake of this example we will build a new node.js backend server from scratch. The backend will handle only one API route (POST /users/:userId/objects), which our mobile application will use to post its intent to upload a file and get back the pre-signed S3 URL for the actual upload.

If you already have a backend for your application, you can easily incorporate a similar approach into your existing framework. Implementing this code in any other language is pretty straightforward too, since the AWS SDK is available for all the common languages.

Since S3 will handle the actual uploads for us, we may decide to go completely serverless and use AWS Lambda to handle the intent as well. This exercise, however, is outside the scope of this tutorial. If you are interested in building pure serverless backends using AWS Lambda, you might refer to this tutorial.

Create a new folder and initialize a new node project:

mkdir server; cd server

npm init

Install the required node modules:

npm install --save express

npm install --save aws-sdk

npm install --save uuid

Create the main body of the application:

var express = require('express')

var AWS = require('aws-sdk')

var uuid = require('uuid')

AWS.config.update({

region: 'us-west-2',

accessKeyId: 'AKIA************',

secretAccessKey: '******************'

});

var S3 = new AWS.S3();

var bucket = 'bugsee-example';

var app = express()

app.post('/users/:userId/objects', function (req, res) {

console.log('Generating presigned URL for ' + req.params.userId);

// Generate unique key for the new object

var key = uuid.v4();

// Record metadata in the DB, associate it with the user

// Construct a path where data will be stored in that bucket.

// It must start with upload/ to match the resource allowed by the IAM policy.

var path = 'upload/users/' + req.params.userId + '/objects/' + key;

// Construct a request

var request = {

Bucket: bucket,

Key: path,

Expires: 3600 // Valid for only 1 hour

};

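// Ask the SDK to sign a PUT URL for this object

S3.getSignedUrl('putObject', request, function(err, result) {

// Signing can fail, e.g. when credentials are missing

if (err) {

console.error('Failed to generate presigned URL', err);

return res.status(500).send({ error: 'Could not generate upload URL' });

}

res.send({

method: 'PUT',

url: result

});

});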

})

app.listen(3000, function () {

console.log('Example app listening on port 3000!')

})

The code above accepts POST requests from the client on the /users/:userId/objects route, creates a new unique ID for the object, and returns JSON containing the URL to which the client should upload the actual file. The link is valid for only one hour.

Obviously, in a real production system we would not hard-code the accessKeyId/secretAccessKey pair within the application itself. We would rather pass it to the application through environment variables, or distribute it in a config file that is not stored in our source control.
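A minimal sketch of the environment-variable approach is shown below. The helper name `loadAwsConfig` is hypothetical. The variable names `AWS_ACCESS_KEY_ID`, `AWS_SECRET_ACCESS_KEY`, and `AWS_REGION` follow the usual AWS convention, but treat them as an assumption and adjust them to your deployment. The fallback region is the one used in this tutorial.

```javascript
// Hypothetical helper: build the AWS.config.update() argument from
// environment variables instead of hard-coded values.
function loadAwsConfig(env) {
  const required = ['AWS_ACCESS_KEY_ID', 'AWS_SECRET_ACCESS_KEY'];
  const missing = required.filter(function (name) { return !env[name]; });

  // Fail fast at startup rather than on the first upload request.
  if (missing.length > 0) {
    throw new Error('Missing environment variables: ' + missing.join(', '));
  }

  return {
    region: env.AWS_REGION || 'us-west-2',
    accessKeyId: env.AWS_ACCESS_KEY_ID,
    secretAccessKey: env.AWS_SECRET_ACCESS_KEY
  };
}

// Demonstration with placeholder values; a server would pass process.env.
const exampleConfig = loadAwsConfig({
  AWS_ACCESS_KEY_ID: 'AKIAEXAMPLE',
  AWS_SECRET_ACCESS_KEY: 'example-secret'
});
console.log(exampleConfig.region);
```

In the server above, the hard-coded block would then become `AWS.config.update(loadAwsConfig(process.env))`.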

You might be tempted to go a step further and not use AWS users at all, relying instead on AWS roles, whose credentials are rotated automatically. While this is generally a very good practice, it might not work well in this particular case. The catch is that you as a developer do not control exactly when role credentials are rotated or expire, and when they expire, so do the URLs signed with them.
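The effect can be illustrated with a little arithmetic. The sketch below assumes that a pre-signed URL stops working at whichever comes first: its own expiry or the expiry of the credentials that signed it. The function name `effectiveLifetimeSeconds` and its parameters are hypothetical and exist only for this illustration.

```javascript
// Hypothetical helper: how long a pre-signed URL can really be used.
//   requestedSeconds    - the Expires value passed when signing
//   credentialsExpireAt - Date when the signing credentials expire,
//                         or null for long-lived user keys
//   now                 - the Date at which the URL is signed
function effectiveLifetimeSeconds(requestedSeconds, credentialsExpireAt, now) {
  if (!credentialsExpireAt) {
    return requestedSeconds; // user keys: only the URL's own expiry applies
  }
  const remaining = Math.floor((credentialsExpireAt.getTime() - now.getTime()) / 1000);
  return Math.max(0, Math.min(requestedSeconds, remaining));
}

// A URL requested for one hour, signed ten minutes before the role
// credentials expire.
const signedAt = new Date('2024-01-01T00:00:00Z');
const roleExpiry = new Date('2024-01-01T00:10:00Z');
console.log(effectiveLifetimeSeconds(3600, roleExpiry, signedAt));
```

On a slow cellular connection, a client that was promised an hour could therefore find its link rejected after a few minutes.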

Uploading the file (iOS)

Below is the Swift code that uses the API endpoint to get the URL and then upload the raw file to that URL:

func uploadtoSignedUrl(_ data: Data, _ url: URL, _ method: String) {

var urlRequest = URLRequest.init(url: url, cachePolicy: .reloadIgnoringCacheData, timeoutInterval: 60);

urlRequest.httpMethod = method;

urlRequest.setValue("", forHTTPHeaderField: "Content-Type")

urlRequest.setValue("\(([UInt8](data)).count)", forHTTPHeaderField: "Content-Length")

urlRequest.setValue("iPhone-OS", forHTTPHeaderField: "User-Agent")

urlRequest.setValue("testImage", forHTTPHeaderField: "fileName")

URLSession.shared.uploadTask(with: urlRequest, from:data) { (data, response, error) in

if error != nil {

print(error!)

}else{

print(String.init(data: data!, encoding: .utf8)!);

}

}.resume();

}

func requestSignedUrl(completion: @escaping ((_ url: URL, _ method: String) -> Void)) {

var urlRequest = URLRequest.init(url: URL.init(string: "http://192.168.0.26:3000/users/testUser/objects")!, cachePolicy: .reloadIgnoringCacheData, timeoutInterval: 60);

urlRequest.httpMethod = "POST";

URLSession.shared.dataTask(with: urlRequest) { (data, response, error) in

if error != nil {

print(error!)

} else {

if let urlContent = data {

do {

let jsonResult = try JSONSerialization.jsonObject(with: urlContent, options:

JSONSerialization.ReadingOptions.mutableContainers)

if let jsonResult = jsonResult as? [String: Any] {

completion(URL.init(string: jsonResult["url"] as! String )!, jsonResult["method"] as! String)

}

} catch {

print("JSON parsing failed")

}

}

}

}.resume()

}

And when we have the file to upload we would call this:

requestSignedUrl(completion: {(_ url: URL, _ method: String) -> Void in

uploadtoSignedUrl(data, url, method)

})

Uploading the file (Android)

In our Android example we will use the OkHttp library to achieve the same thing; it makes working with HTTP requests much easier:

private class UploadTask extends AsyncTask<String, Void, AsyncTaskResult<Void>> {

private static final int NETWORK_TIMEOUT_SEC = 60;

private static final String OUR_SERVER_URL = "http://192.168.0.26:3000/users/testUser/objects";

@Override

protected AsyncTaskResult doInBackground(String... params) {

try {

// Obtain the url

OkHttpClient client = new OkHttpClient.Builder()

.connectionSpecs(Arrays.asList(ConnectionSpec.MODERN_TLS, ConnectionSpec.CLEARTEXT))

.connectTimeout(NETWORK_TIMEOUT_SEC, TimeUnit.SECONDS)

.readTimeout(NETWORK_TIMEOUT_SEC, TimeUnit.SECONDS)

.writeTimeout(NETWORK_TIMEOUT_SEC, TimeUnit.SECONDS)

.build();

Request getUrlRequest = new Request.Builder()

.url(OUR_SERVER_URL)

.post(RequestBody.create(MediaType.parse("text/plain"), ""))

.build();

Response getUrlResponse = client.newCall(getUrlRequest).execute();

if (!getUrlResponse.isSuccessful())

return new AsyncTaskResult(new Exception("Get url response code: " + getUrlResponse.code()));

String responseJsonString = getUrlResponse.body().string();

JSONObject getUrlResponseJson = new JSONObject(responseJsonString);

String url = getUrlResponseJson.getString("url");

// Upload the file

String imagePath = params[0];

Request uploadFileRequest = new Request.Builder()

.url(url)

.put(RequestBody.create(MediaType.parse(""), new File(imagePath)))

.build();

Response uploadResponse = client.newCall(uploadFileRequest).execute();

if (!uploadResponse.isSuccessful())

return new AsyncTaskResult(new Exception("Upload file response code: " + uploadResponse.code()));

return new AsyncTaskResult(null);

} catch (Exception e) {

return new AsyncTaskResult(e);

}

}

@Override

protected void onPostExecute(AsyncTaskResult result) {

super.onPostExecute(result);

if (result.hasError()) {

Log.e(TAG, "UploadTask failed", result.getError());

Toast.makeText(MainActivity.this, "UploadTask finished with error", Toast.LENGTH_LONG).show();

} else {

Toast.makeText(MainActivity.this, "UploadTask finished successfully", Toast.LENGTH_LONG).show();

}

}

}

Handling the completion

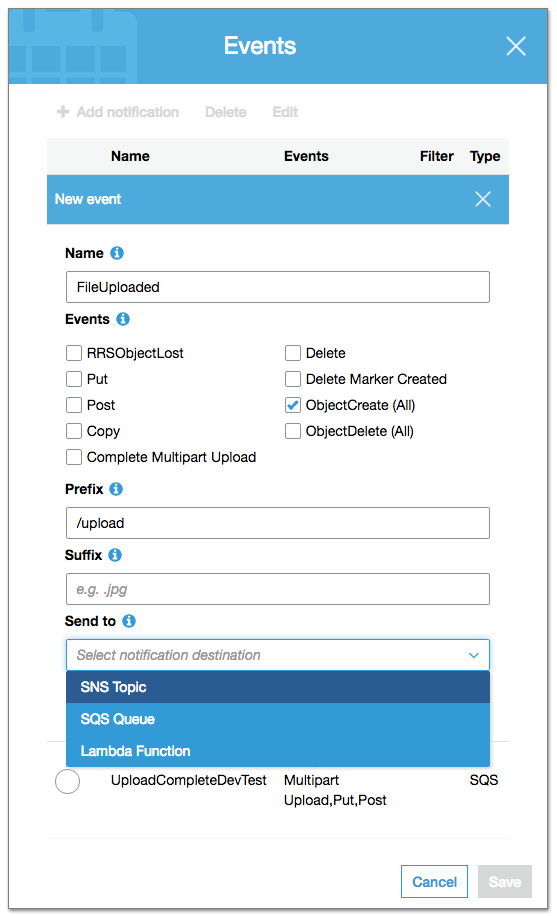

The only part left is notifying your backend once the file has been successfully uploaded. One option is to create another endpoint and have the client ping the server again to close the loop, but that would mean another API call. Luckily, S3 can trigger a custom action (event) for every object created. The screenshot above shows the available options: S3 can invoke a Lambda function, or send a message to SQS or SNS. Depending on our needs, we can use one of these options to trigger further processing of the uploaded file, but that is outside the scope of this tutorial.

Summary

We’ve built a complete solution for uploading files directly to AWS S3, securely and efficiently. The complete source code of this example is hosted on GitHub for your convenience. The code includes the node.js server as well as clients for iOS and Android.